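In current MCP workflows, full tool results often pass through the agent's context. S2SP offers a selective-disclosure path for tabular results: the agent sees only the columns it selects, while the full data moves between the MCP servers.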

$

Eliminate Token Waste

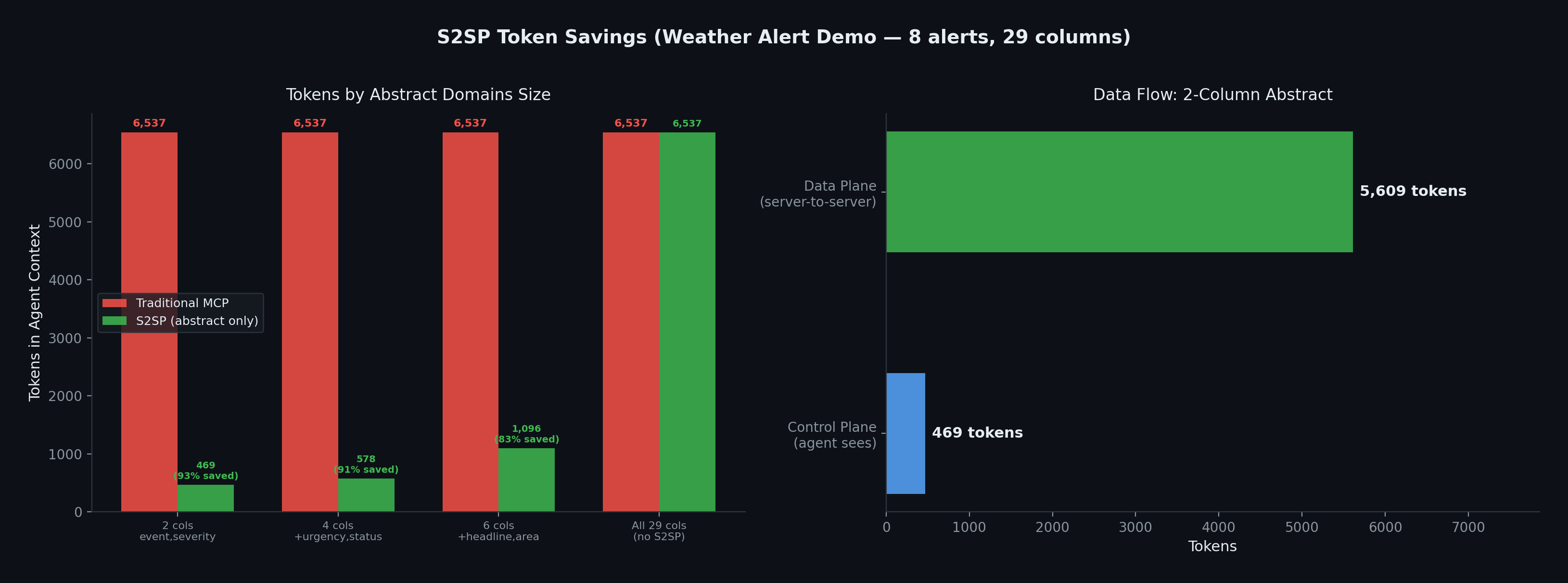

In the weather-alert demo, a 2-column abstract used 469 tokens instead of 6,537 for the full 29-column result. Actual savings depend on row count, column count, and selected abstract fields.
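The idea behind an abstract can be sketched in a few lines: keep only the selected fields from each row, and compare token counts. This is a minimal illustration, not the S2SP SDK's code. The helpers `project_abstract` and `token_savings` and the alert field names are hypothetical. Applied to the demo figures, 1 − 469/6,537 ≈ 92.8% fewer tokens.

```python
from typing import Any


def project_abstract(rows: list[dict[str, Any]], fields: list[str]) -> list[dict[str, Any]]:
    """Hypothetical helper: keep only the selected abstract fields from each row."""
    return [{f: row[f] for f in fields if f in row} for row in rows]


def token_savings(abstract_tokens: int, full_tokens: int) -> float:
    """Fraction of tokens saved by sending the abstract instead of the full result."""
    return 1 - abstract_tokens / full_tokens


# Hypothetical alert rows; the demo's real result had 29 columns.
rows = [
    {"event": "Flood Warning", "severity": "Severe", "area": "County A", "description": "Heavy rain expected."},
    {"event": "Heat Advisory", "severity": "Moderate", "area": "County B", "description": "High temperatures."},
]

abstract = project_abstract(rows, ["event", "severity"])
print(abstract)
# [{'event': 'Flood Warning', 'severity': 'Severe'}, {'event': 'Heat Advisory', 'severity': 'Moderate'}]

# Token counts reported for the weather-alert demo.
print(f"{token_savings(469, 6537):.1%}")  # 92.8%
```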

⚡

Measured Local Fetch

The demo's data-plane fetch completed in 7ms on localhost. Remote latency depends on the network path between the MCP servers.

🔒

Agent Stays in Control

The agent initiates and orchestrates all transfers. No data moves without agent authorization. The agent controls which servers receive each resource_url.

🔧

Small Dependency Set

The SDK builds on FastMCP plus a few common Python libraries: Starlette, uvicorn, httpx, pydantic, and anyio.

🛡

Capability URLs

256-bit presigned URLs are single-use and expire after 10 minutes by default. Production deployments should use TLS and appropriate server access controls.
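One common way to get single-use, expiring capability URLs is an unguessable random token plus a server-side record that is deleted on first use. The sketch below assumes that design; it is not the SDK's implementation. `CapabilityStore`, the payload, and the base URL are hypothetical. `secrets.token_urlsafe(32)` draws 32 random bytes, which gives the 256 bits of entropy.

```python
import secrets
import time
from typing import Any


class CapabilityStore:
    """Hypothetical in-memory issuer of single-use, expiring capability tokens."""

    def __init__(self, ttl_seconds: float = 600.0) -> None:  # 10-minute default TTL
        self.ttl_seconds = ttl_seconds
        self._entries: dict[str, tuple[float, Any]] = {}

    def issue(self, payload: Any, base_url: str) -> str:
        # 32 random bytes = 256 bits, encoded as URL-safe base64 (43 chars).
        token = secrets.token_urlsafe(32)
        self._entries[token] = (time.monotonic() + self.ttl_seconds, payload)
        return f"{base_url}/{token}"

    def redeem(self, token: str) -> Any:
        # pop() deletes the entry, so a second redeem of the same token fails.
        entry = self._entries.pop(token, None)
        if entry is None:
            raise KeyError("unknown or already-used token")
        expires_at, payload = entry
        if time.monotonic() > expires_at:
            raise KeyError("token expired")
        return payload


store = CapabilityStore()
url = store.issue({"table": "alerts"}, "https://data.example.com/s2sp")  # hypothetical base URL
token = url.rsplit("/", 1)[1]
print(store.redeem(token))  # {'table': 'alerts'}
```

Deleting the record on redemption is what makes the URL single-use; a second `redeem(token)` raises `KeyError`. A real deployment would also need shared storage if several server processes serve the same URLs.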

↔

MCP-Compatible Path

S2SP uses standard MCP tools and JSON arguments. Traditional agents can call resource tools without abstract_domains and receive normal full results.
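The backward-compatible behavior amounts to one branch in the tool: with no `abstract_domains`, return the full rows; with it, return only the requested columns plus a `resource_url`. This plain-Python sketch is hypothetical. The tool name `get_alerts`, the response keys, the sample rows, and the assumption that `abstract_domains` lists column names are illustrative, not the SDK's API, and the URL is a placeholder.

```python
from typing import Any, Optional

# Hypothetical full result standing in for a real data source.
ALERTS = [
    {"event": "Flood Warning", "severity": "Severe", "area": "County A"},
    {"event": "Heat Advisory", "severity": "Moderate", "area": "County B"},
]


def get_alerts(abstract_domains: Optional[list[str]] = None) -> dict[str, Any]:
    """Hypothetical resource tool showing the two response shapes."""
    if abstract_domains is None:
        # Traditional call: no abstract_domains, so return the normal full result.
        return {"rows": ALERTS}
    # S2SP-style call: only the requested columns, plus a reference that
    # another server could use to fetch the full rows (placeholder URL).
    abstract = [{k: row[k] for k in abstract_domains if k in row} for row in ALERTS]
    return {"abstract": abstract, "resource_url": "https://data.example.com/s2sp/placeholder"}


print(get_alerts()["rows"][0]["area"])              # County A
print(get_alerts(["event"])["abstract"])            # [{'event': 'Flood Warning'}, {'event': 'Heat Advisory'}]
```

Because the extra argument is optional, an agent that has never heard of S2SP calls the tool exactly as before and gets the full result.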